By Matt Boyd & Nick Wilson

TLDR/Summary

- The 2026 fuel crisis was not a surprise. New Zealand’s (NZ) extreme liquid fuel vulnerability was identified years ago, yet the country still lacked onshore resilience measures, pre-agreed rationing frameworks, prioritisation among critical consumers, or decision thresholds when Hormuz closed.

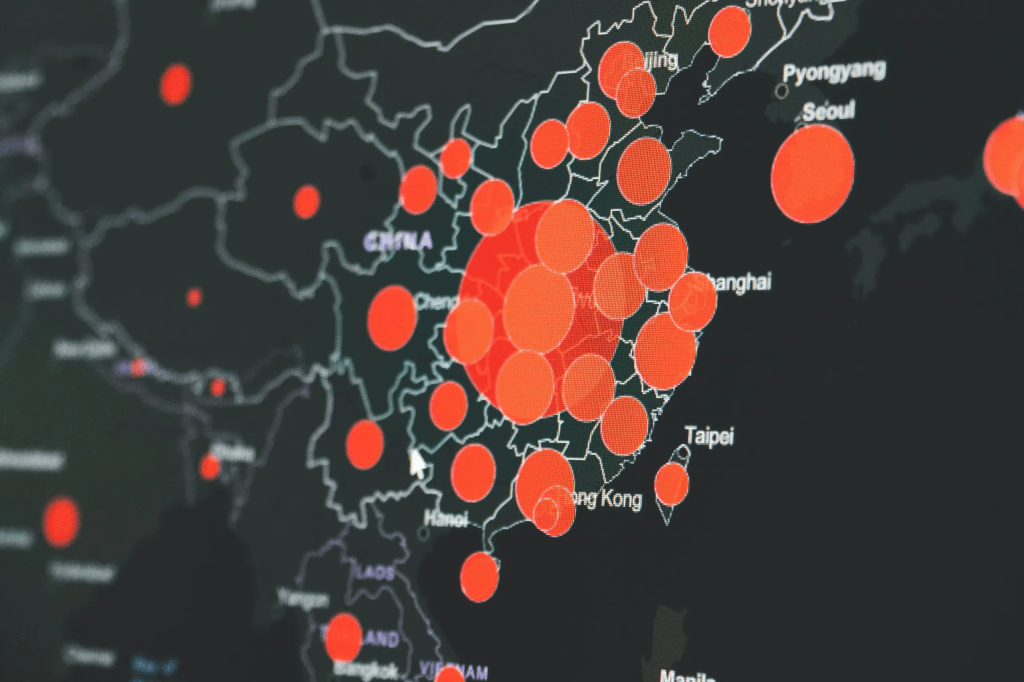

- As with Covid-19, the risk was known. The failure was institutional: no living public risk register, no clear, ready-to-deploy decision frameworks, no democratic authorisation of the assumptions behind preparedness measures.

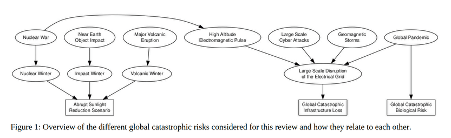

- But fuel and Covid are not the story. They are symptoms. NZ faces a wide portfolio of national risks: supply chain disruption, satellite and communications failure, food shocks from sunlight reduction (e.g. nuclear or volcanic winter), fertiliser shortage, pandemics, cyberattack, and more. Each has a catastrophic scenario, yet there is no unified, public-facing system for assessing these risks together.

- Our research identified three core failures in national risk assessment: systematic exclusion of global catastrophic risks; opaque assumptions that lack public authorisation; and a failure to ever ask how things could have been worse – the downward counterfactual discipline essential for calibrating how far short of adequate our current preparations actually are.

- “National risk register” is an outdated frame in a world of interdependent global stresses. What NZ needs is a hazard-agnostic National Vulnerability Register, focused on what our critical systems are actually exposed to across all hazards, paired with a costed National Mitigation Register of options to address those exposures.

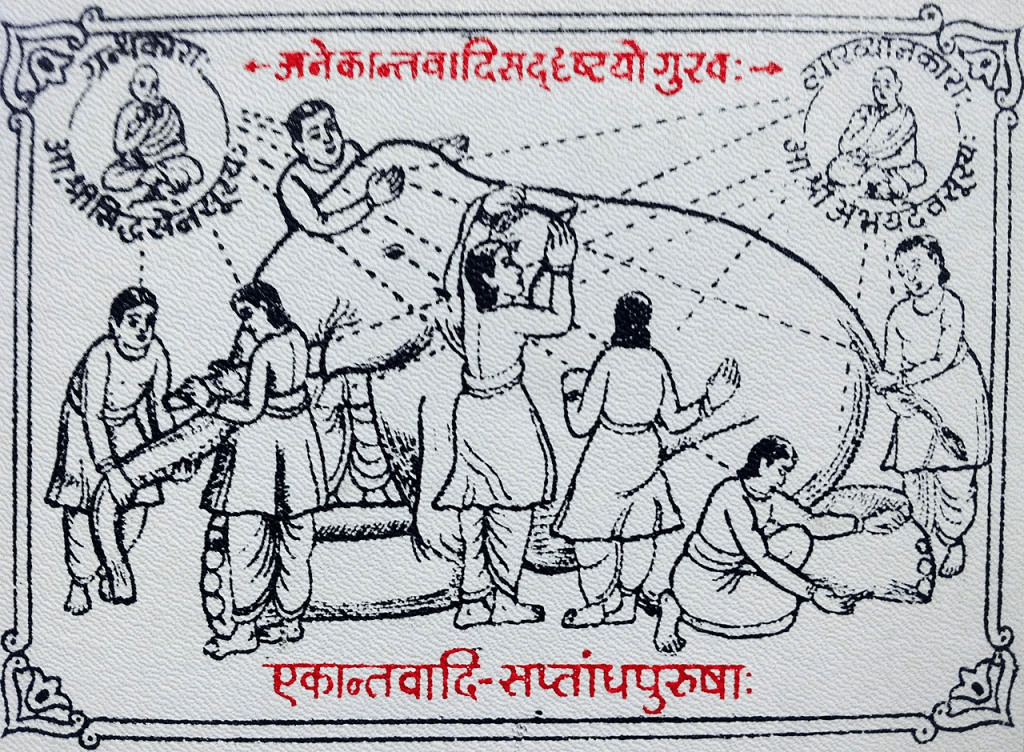

- This cannot remain a technocratic exercise. Advancing technology makes genuine two-way public engagement feasible, enabling crowd-sourced analyses and worked resilience options from civil society, NGOs, and researchers to surface and feed into real deliberation. Democracy has a measurable protective effect in crises; that advantage must be actively cultivated, not squandered.

- All of this requires independent institutional stewardship: a Parliamentary Commissioner for Catastrophic Risk, charged with ongoing oversight of whether NZ is actually prepared, not just whether it coped with the last crisis.

A crisis that was never a surprise

On 28 February 2026, coordinated US and Israeli strikes on Iran triggered a conflict that rapidly closed the Strait of Hormuz. Within days, petrol hit NZ$3 per litre and stations began running dry. There is now legitimate concern about the ongoing security of NZ’s liquid fuel supply.

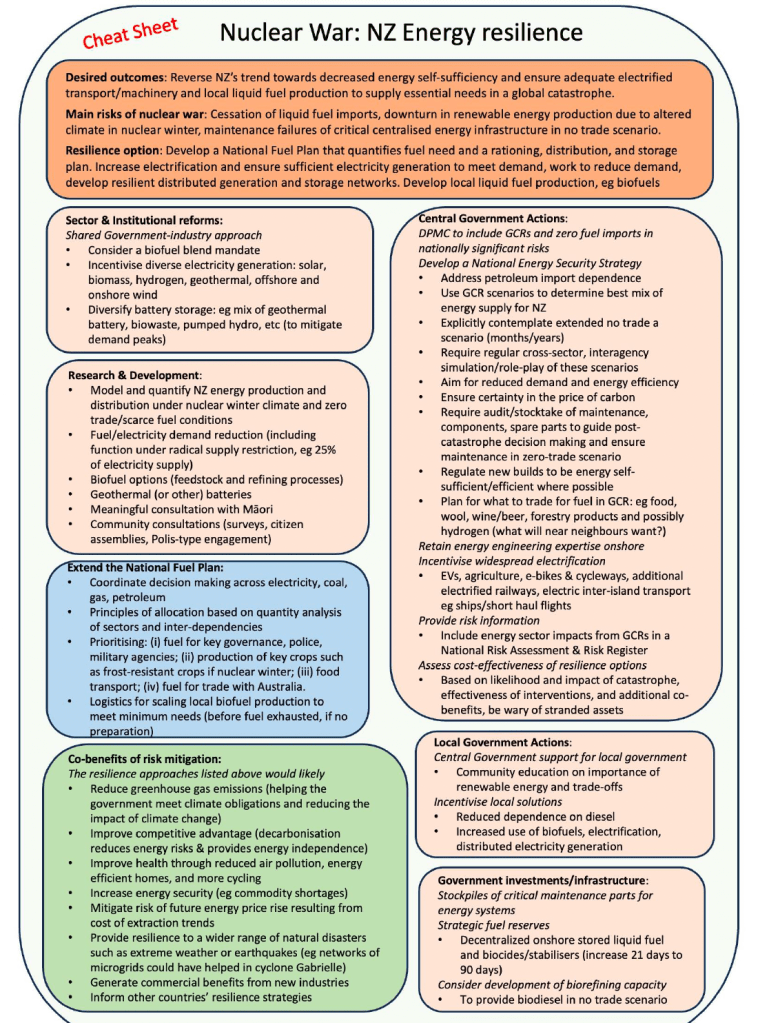

None of this is a surprise. NZ imports essentially all of its refined liquid fuel from South Korea and Singapore, where refineries source crude oil shipped by tanker through the Strait of Hormuz. MFAT modelled this scenario explicitly in July 2025. Our own 2023 research identified liquid fuel as NZ’s most critical strategic vulnerability in major global conflict, and our Beyond 90 Days analysis of the Government-commissioned 2025 Fuel Security Study estimated that under catastrophic disruption, onshore stocks could last approximately 160 days with severe rationing for essential services only, a finding the Study itself obscured. The first step towards a solution, the NZ Fuel Security Plan, was published only in November 2025, too late to substantially affect prevention and planning for the current fuel crisis.

The question is not whether anyone saw this coming. Many did. The question is why, when the risk was known, NZ lacked a credible public risk register that named this vulnerability, quantified it, listed resilience and mitigation options, and enabled a mature public conversation about where to invest resources in anticipation. Four things the government has done since the start of the crisis could and should have been done before the crisis materialised:

- Quantification (eg, of fuel volumes and usage rates, including for essential services)

- Stratification and prioritisation (across the ‘critical users’ listed in the NZ Fuel Plan)

- Informing the Public (about the risk, the plans, the quantification and stratification, and importantly, what vulnerabilities yet remained)

- A call for research, analysis, and considered solutions (as with the new ‘tip line’ for letting decision makers know where the obstacles are to an efficient fuel crisis response)

We need to learn from this crisis and generalise these four steps, in anticipation, across all of NZ’s major vulnerabilities.

We have been here before. Coronavirus pandemics were identified as a ticking time bomb after the SARS pandemic in 2003. Before Covid-19, we published work on the benefits of border closure for island nations, not with all the answers, but as a framework for thinking through what was measurable, what was uncertain, and when action thresholds might be triggered. That work proved useful when the pandemic hit. But even when relevant research exists, if it is not embedded in a living, publicly accessible register connected to government decision-making, it remains on the margins.

Broaden the debate

There is now enormous public discussion about the immediate fuel response: rationing tiers, critical consumer lists, stock levels, tanker movements. This is necessary. But it is not sufficient, and it risks repeating the Covid trap of obsessing over the specifics of the last crisis while the next one loads.

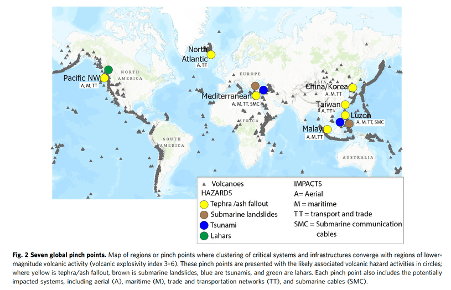

NZ is exposed to a wide portfolio of risks beyond complete dependence on liquid fuel imports. Disruption to global shipping affects far more than petrol, diesel and jet fuel. Attacks or natural hazards could damage undersea communications cables or satellite infrastructure, crippling financial systems, supply chains, and emergency coordination simultaneously. Abrupt sunlight reduction, from a major volcanic eruption or nuclear war, would devastate food yields regardless of fuel supplies. Fertiliser shocks, extreme pandemics, grid-disabling cyberattacks or geomagnetic storms, and cascading financial instability are all plausible within planning horizons. Our 2023 NZCat report was built around three realities that are now visibly materialising:

- The most dangerous risks originate elsewhere (outside NZ) and spread to affect the entire world;

- We face potential destruction, not just disruption, of critical global infrastructure;

- War is the defining feature of human history, not an aberration to be planned around.

The Hormuz crisis should not simply provoke a fuel plan. It should provoke a national conversation about risk and vulnerability in general, one that is systematic, comprehensive, and democratic.

Three failures in how NZ society assesses national risk

Our peer-reviewed research identified two core and recurring deficiencies in national risk assessments. We now add a third.

- First, national risk assessments systematically exclude global catastrophic risks, the high-consequence events most likely to cause civilisation-scale harm. The Hormuz crisis is a partial example; scenarios involving nuclear war, extreme pandemics, or major volcanic eruptions at global logistics pinch points are more severe still, and increasingly plausible.

- Second, national risk assessments lack public authorisation of their underlying assumptions. Scenario choice matters enormously: a 10% fuel disruption for six months is a fundamentally different problem from a 100% supply shock lasting a year, with radically different implications for what mitigation looks rational. Time horizons, discount rates, decision rules, and what citizens actually value most all shape conclusions. When assumptions are opaque, the public cannot evaluate the risk picture, contribute knowledge that might improve it, or express informed preferences about how best to invest public resources in resilience.

- Third, and currently absent from the national conversation, risk assessments never ask how things could have been worse. Governments tend to evaluate a response by whether it held, then tick the box and move on. But the right question is: what would it have taken to overwhelm this response? What if Covid-19 had been far more lethal? What if most of the oil infrastructure in the Middle East had been destroyed? What if nuclear weapons had been used in the current conflict, or Hormuz had closed for two years rather than months? This downward counterfactual discipline is essential for calibrating how far short of adequate our current preparations actually are. A government that only asks “did we cope?” will typically conclude it “did the best that could have been expected”.
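The scenario comparison in the second point can be made concrete with some rough arithmetic. The sketch below is illustrative only: it assumes constant demand, ignores onshore stocks and rationing, and measures each scenario's shortfall in "days of normal demand" (supply loss fraction multiplied by duration). The class and field names are hypothetical, and six months is approximated as 182 days.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """A fuel supply scenario (hypothetical structure, for illustration)."""
    name: str
    supply_loss_fraction: float  # share of normal supply lost, 0.0 to 1.0
    duration_days: int

    def shortfall_demand_days(self) -> float:
        """Cumulative shortfall, expressed as days of normal demand.

        Simplifying assumption: demand is constant and no stocks or
        rationing offset the loss.
        """
        return self.supply_loss_fraction * self.duration_days


# The two scenarios named in the text; six months approximated as 182 days.
mild = Scenario("10% disruption, six months", 0.10, 182)
severe = Scenario("100% supply shock, one year", 1.00, 365)

# How many times larger the severe shortfall is than the mild one.
ratio = severe.shortfall_demand_days() / mild.shortfall_demand_days()

for s in (mild, severe):
    print(f"{s.name}: {s.shortfall_demand_days():.1f} days of normal demand")
print(f"Severe scenario shortfall is about {ratio:.0f} times larger")
```

Even under these crude assumptions the two scenarios differ by more than an order of magnitude, which is why the choice of planning scenario needs to be visible and open to challenge.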

These three societal failures reflect a shared institutional pathology: risk assessment is treated as a technocratic exercise rather than a democratic one, producing documents that circulate among officials rather than living tools that connect citizens to the realities of the world and the choices their government faces on their behalf.

From risk register, to vulnerability register, to mitigation register

It is worth being precise about what we actually need, because “national risk register”, typically presented as a list of hazards, is an outdated frame.

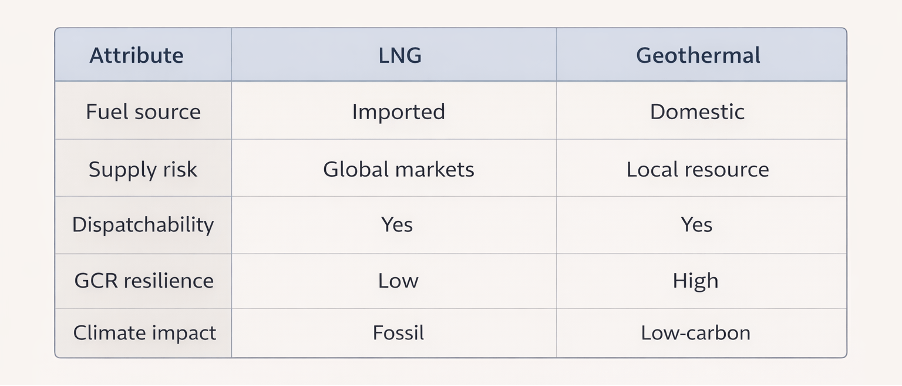

Many different hazards, including trade disruption, tariffs, electromagnetic pulse, geomagnetic storm, war, pandemic, can all produce the same outcome: a catastrophic reduction in liquid fuel available to NZ. The common factor is not the hazard but the vulnerability: NZ’s near-total dependence on imported liquid fuel for almost everything.

What we need is a National Vulnerability Register that is hazard-agnostic (though extracted in part from a detailed study of hazards) and focused on what our critical systems are actually exposed to.

This should then be paired with a National Mitigation Register: an ideally costed, comparable menu of options that could patch those vulnerabilities, or at least take the edge off anticipated impacts, so that basic needs such as food, water, communication, and critical goods transport can still be met for all citizens.

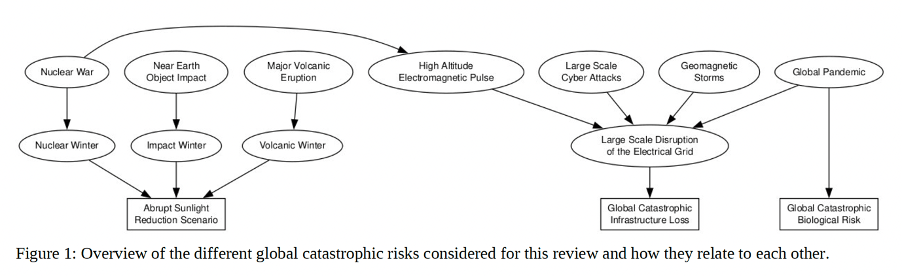

Our peer-reviewed 2025 Policy Quarterly paper argued for moving beyond a hazard-by-hazard approach entirely, adopting a systems and complexity lens that accounts for cascade dynamics, interdependencies, and the polycrisis nature of global risks. This matters because the truly catastrophic scenarios, should they ever occur, and whatever their origin, tend to cause harm through one of three common pathways (see Figure below):

- Global catastrophic infrastructure loss (eg, electricity, liquid fuel, internet, shipping, etc);

- Abrupt sunlight reduction (eg, nuclear winter, volcanic winter);

- Pandemic disease.

A register organised around vulnerabilities and common pathways such as these is far more meaningful and useful than one organised around individual hazards assessed in isolation. Furthermore, cost-benefit analysis is better informed when all possible causes of a given harm are collapsed into one aggregate likelihood.
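To illustrate why aggregation matters, the sketch below combines several hazards that lead to the same harm into one likelihood. Every number in it is a hypothetical placeholder, not an estimate from our research, and it makes the simplifying assumption that the hazards are independent (in reality many are correlated, which a real assessment would need to model).

```python
from math import prod

# HYPOTHETICAL annual probabilities that each hazard causes a severe
# liquid fuel shortfall. Placeholders for illustration only.
hazard_probs = {
    "war_or_blockade": 0.02,
    "trade_disruption": 0.01,
    "geomagnetic_storm": 0.005,
    "pandemic": 0.005,
}

# HYPOTHETICAL cost of the harm if it occurs, in NZ$ millions.
HYPOTHETICAL_LOSS = 10_000


def aggregate_probability(probs):
    """Probability that at least one hazard occurs in a year.

    Assumes the hazards are independent: 1 - product of (1 - p_i).
    """
    return 1.0 - prod(1.0 - p for p in probs)


def expected_annual_loss(probability, loss):
    """Expected loss per year for a harm with the given probability."""
    return probability * loss


aggregate = aggregate_probability(hazard_probs.values())
largest_single = max(hazard_probs.values())

print(f"Largest single-hazard probability: {largest_single:.4f}")
print(f"Aggregate probability of the harm: {aggregate:.4f}")
print(
    "Expected annual loss (NZ$m), single hazard vs aggregate: "
    f"{expected_annual_loss(largest_single, HYPOTHETICAL_LOSS):.0f} vs "
    f"{expected_annual_loss(aggregate, HYPOTHETICAL_LOSS):.0f}"
)
```

With these placeholder inputs, the aggregate likelihood of the harm is roughly double that of the largest single hazard. A mitigation that looks poor value when weighed against any one hazard can therefore look worthwhile when weighed against the vulnerability as a whole.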

Although a classified NZ national risk assessment exists, the publicly facing material is woefully inadequate (eg, see the annex in DPMC/MfE’s 2025 long-term insights briefing on resilience to hazards). Liquid fuel supply merits a single phrase under ‘Significant disruption or failure of critical infrastructure.’ There are no detailed scenarios, no cascade consequences, no mitigation options, no indication of what preparations are still absent. This is not just a transparency problem; it is a democratic deficit.

The two-way tool we need, and can now build

In our 2023 paper we argued for a two-way engagement mechanism: not just broadcasting risk information downward (in highly redacted form), but actively developing the risk picture through structured public input. That argument is now more achievable than ever.

Advancing technology, including the capacity of large language models to consume, synthesise, and be trained on domain-specific material, means that contributions from businesses, community organisations, academics, researchers, NGOs, and individual citizens can be collated and synthesised into a structured set of options without requiring officials to read every submission individually. The specific platform architecture matters less than the principle: there are multiple ways to instantiate this effectively, and the design should be decided through consultation. What matters is that such a system is produced, is genuinely open, and generates outputs that inform meaningful deliberation and real decisions.

This is effectively the fuel crisis ‘tip line’ writ large, formalised, and generalised across all risks, including global catastrophic risks.

This is not just about information quality. It is about whose voice gets heard. A government-only process will reflect government-of-the-day priorities, official assumptions, and industry-captured analysis (it is frequently infrastructure lobbyists who make the most detailed submissions on resilience consultations).

A properly designed public engagement process would inform the public with details, and surface what NGOs like Wise Response, EatNZ, and the NZCat project have already developed: costed analyses of food security, biodiesel production capacity, essential service fuel allocation, and more. Pooling such analyses creates a genuine, comparable menu for informed and democratic decision-making. It also means that when deliberation occurs, citizens are not dependent on information filtered by any ideologically trapped government-of-the-day, or the ‘usual consultants’. The needed imagination can be expressed.

From risk catalogue to democratic decision

The deeper purpose is not transparency for its own sake. It is to enable a deliberative democratic process for directing resilience investments and building social licence for the outputs. The case for increasing the variety of problem-solving frames and ideas across society is grounded in evidence from the polycrisis literature, and democracy confers a measurable advantage in crises.

NZ is not short of known risks (we compiled a list of global catastrophic hazards in our 2023 NZCat report, see pp. 101–3). What NZ lacks is a structured, publicly accountable process for deciding which to mitigate, how, at what cost, and to what agreed level.

A critical shortcoming of most risk registers is that they stop at listing risks, effectively declaring “we’ve got this covered.” They rarely detail what more is needed, or desired, that is not already in place, what it would cost, or what benefits would follow. Connecting a vulnerability assessment to a costed action menu is precisely the step that turns a register into improved outcomes with societal acceptability.

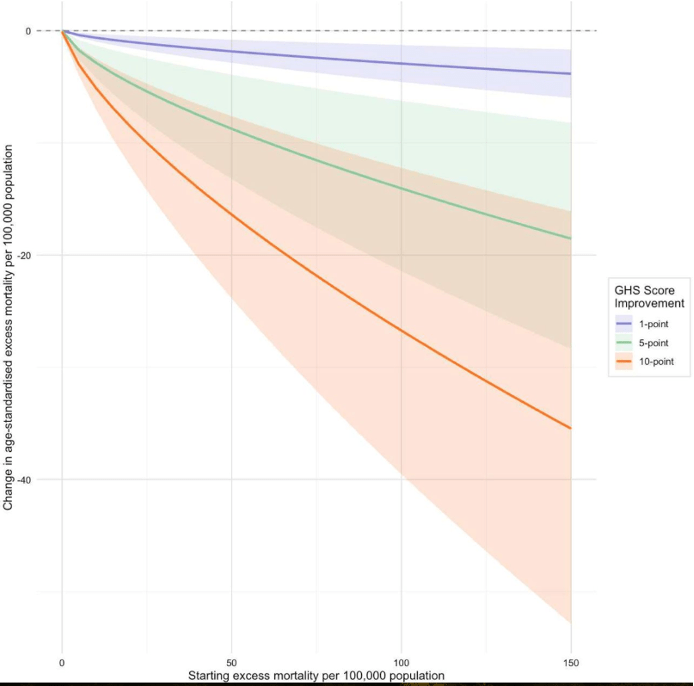

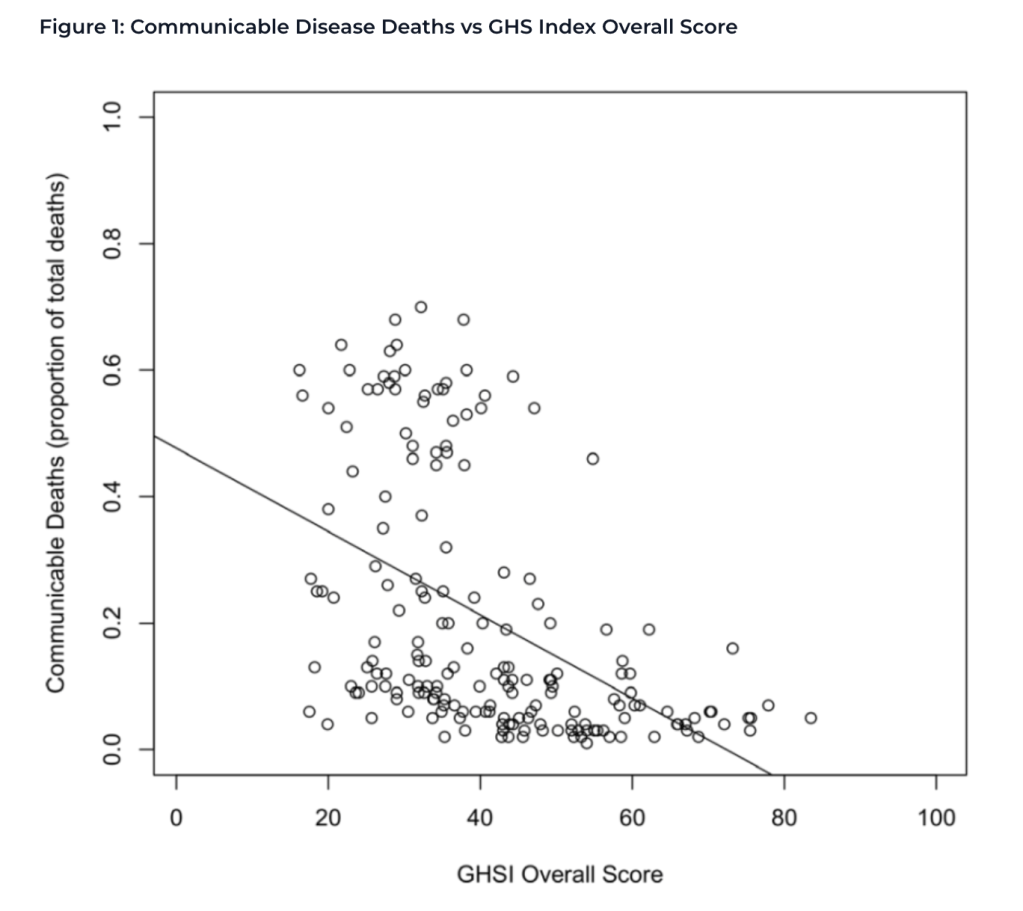

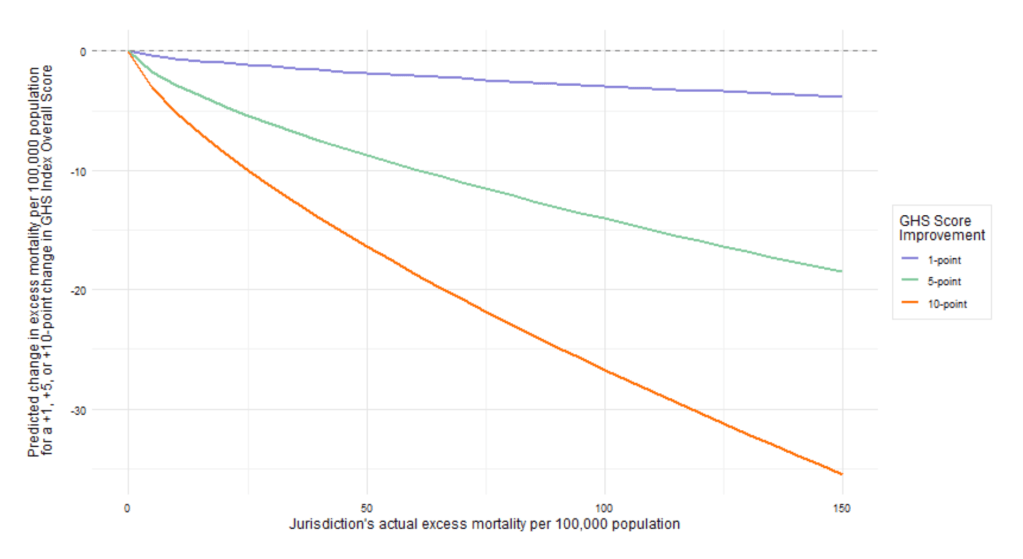

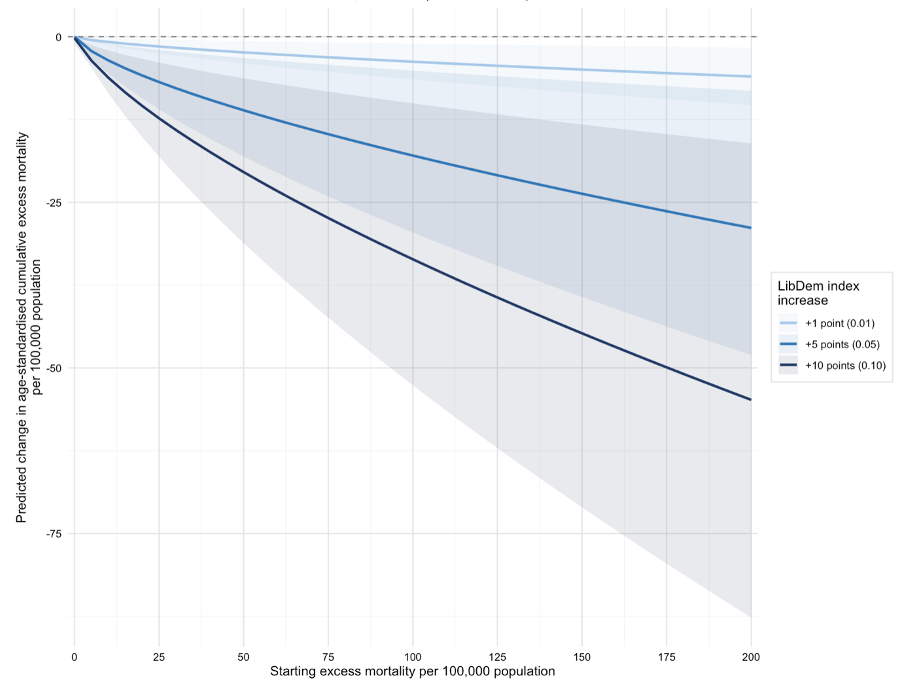

That menu must feed into a process of genuine democratic deliberation. Our analysis of Covid-19 outcomes showed clearly that democracy, particularly in island nations, strongly predicted fewer deaths (the Figure below shows the modelled reduction in excess deaths for a given increase in democracy score, based on Covid-19 outcome data).

The democratic advantage is real. And yet trust in government institutions is eroding across democracies. Rather than accepting this, the NZ Government should actively counter it: publishing assumption-transparent risk and vulnerability assessments, supporting citizens’ assemblies where the assumptions and resilience trade-offs can be debated in depth by informed, representative groups, and empowering those groups to reach conclusions that carry democratic weight.

This is not naïve idealism. It is what properly functioning democracy looks like when facing hard choices, in a complex world, with real costs and trade-offs. Citizens need the tools to decide collectively how to manage this transition.

That is precisely what a National Vulnerability Register, connected to a National Mitigation Register and genuine public deliberation, makes possible.

A Parliamentary Commissioner for Catastrophic Risk

All of this requires independent institutional stewardship. We renew our recommendation from the NZCat report: NZ should establish a Parliamentary Commissioner for Catastrophic Risk (PCCR), an independent officer of Parliament charged with overseeing the national risk and vulnerability assessment process, scrutinising its assumptions, ensuring global catastrophic risks are included, and assessing whether public engagement mechanisms are genuinely democratic and effective.

The PCCR would provide what no current NZ institution does: ongoing, independent, publicly accountable oversight of whether NZ is actually prepared, not just whether it coped with the last crisis. In an election year, this is a concrete institutional reform worth demanding.

Building resilience for a world of iterated shocks and polycrisis

We need to be clear-eyed about the broader context. The Global Shield risk policy initiative has noted that the world appears to have entered a new risk paradigm in the late 2010s and early 2020s, and that core assumptions of 20th-century governing (long planning horizons, slow policy processes, siloed expertise, implicit institutional trust, fiscal capacity to recover) may simply be inadequate for the decades ahead.

Increasing and inexorable stress across a wide range of global systems raises the possibility of systemic risk or critical system collapse, and this trajectory is likely to be punctuated by abrupt catastrophes of the kinds discussed above. However, many resilience decisions and investments will help in both chronic breakdown and sudden crisis scenarios.

NZ cannot put its faith in restoring pre-Covid growth trajectories or consuming the same volumes of imported energy we have relied on. What is needed is the opposite of fragile complexity: modularity, redundancy, diversity, decentralisation, and simplification of our critical systems. That means shorter supply chains, local production capacity for essentials (eg, at least one biofuel refinery), redundant infrastructure, closer cooperation with Australia and Pacific neighbours (eg, on vaccine manufacturing and sovereign shipping assets), and distributed decision-making. Building this kind of generalised resilience, which defends against many risks simultaneously, requires democratic buy-in, because it involves real trade-offs and real costs. But the upshot is that New Zealanders will suffer less anxiety and harm whenever global catastrophe strikes – they might even keep thriving.

We cannot achieve this through ad hoc action, trying to put out one fire at a time, especially when our vulnerabilities are correlated: a single geopolitical rupture can simultaneously threaten fuel, food, communications, and financial stability. A systematic approach, drawing on the research and ideas already distributed across NZ’s research community, civil society, and private sector, is the only answer.

The Hormuz crisis will likely eventually resolve. But the next systemic shock will come, be it a pandemic, nuclear event, volcanic eruption, technological catastrophe, or another geopolitical rupture. The time to build the vulnerability register, the mitigation menu, the deliberative tools, and the resilience NZ actually needs is now, before the next crisis reminds us, once more, that we already knew… or even prevents NZ achieving the resilience it needs.