In this post, I summarise and review the book What’s the worst that could happen?: Existential risks and extreme politics – by Australian politician Andrew Leigh (published 9 Nov 2021)

TLDR the TLDR: Populism enhances x-risks

TLDR: Andrew Leigh argues that the short-sighted politics of populism has enhanced existential risk. Populism is driven by problems of jobs, snobs, race, pace and luck. We can control populism by strengthening democratic systems and being stoic. However, Leigh says little about addressing the causes of populism itself.

Intro and purpose of the book

Australian Labor Party representative Andrew Leigh used his time during the Covid-19 disruptions to write a short book on existential risks to humanity (x-risk).

In the book, Leigh leverages longtermist thinking, describes the various x-risks (ie extreme pandemics, climate change, nuclear war, artificial superintelligence, environmental degradation, asteroid/comet impact) and couples this with observations about the rise of populist politics.

He concludes that not only does populism raise the probability of totalitarian dystopia, but it undermines our ability to prevent and mitigate x-risks generally.

In what follows I outline his approach chapter-by-chapter and conclude with thoughts of my own.

Why the future matters

The first chapter starts from the premise that a future utopia is inevitable if humanity survives long enough. This claim emerges by extrapolating a historical trajectory from our tough Iron Age existence through to modern comforts, and then beyond. Leigh rejects discounting the value of future lives: ‘discounting at a rate of 5 percent implies that Christopher Columbus is worth more than all 8 billion people alive today’ (p.8). On this view, our lives today are no more important than those of future generations, and we have a responsibility to those generations.
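The arithmetic behind the Columbus quote can be checked with a rough sketch. The reference year of 1492 and the use of simple annual compounding are my own assumptions for illustration; the book does not spell out the calculation.

```python
# Rough check of the claim that a 5 percent annual discount rate makes one
# person in Columbus's time outweigh everyone alive today.
# Assumptions (not from the book): reference year 1492, annual compounding.

DISCOUNT_RATE = 0.05
COLUMBUS_YEAR = 1492      # assumed reference year
PRESENT_YEAR = 2021       # year the book was published
WORLD_POPULATION = 8e9    # '8 billion people alive today'

years_elapsed = PRESENT_YEAR - COLUMBUS_YEAR

# Relative weight of one life in 1492 versus one life today
weight_ratio = (1 + DISCOUNT_RATE) ** years_elapsed

print(f"Years elapsed: {years_elapsed}")
print(f"One 1492 life counts as {weight_ratio:.2e} present-day lives")
print(f"Exceeds world population: {weight_ratio > WORLD_POPULATION}")
```

Under these assumptions the compounding factor comfortably exceeds 8 billion, which is the absurdity Leigh is pointing at.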

Leigh notes, however, that our survival is not a given and points out that someone’s risk of dying from an extinction event is higher than many other common risks. He provides psychological (availability heuristic) and economic (campaign contributions) reasons why policy is biased against preventing human extinction.

This situation is exacerbated by the rise of political populism. Leigh defines populists as those who claim to represent ‘the people’ in a challenge to ‘the elites’ who are painted as dishonest or corrupt. Populists can represent the left or right of the political spectrum, have little respect for experts, and tend to champion immediate priorities. They are ‘drivers distracted by back seat squabbles’ (p.14).

Leigh’s use of accessible metaphors involving bar fights, stolen wallets, and football makes this introduction simultaneously an easy read and the most Australian work on x-risk to date.

The next five chapters survey the threats from biorisks, climate change, nuclear war and artificial superintelligence, followed by a chapter on probability and risk. Leigh describes each risk and canvasses a suite of standardly recommended policy options specific to it. For those not familiar with such background, the chapters are an easy introduction to x-risks.

‘For each of the existential risks we face, there are sensible approaches that could curtail the dangers. For all the risks we face, a better politics will lead to a safer world’ (p.15).

Biothreats

Biological threats, both naturally occurring and human-created, are the focus of Chapter 2. Leigh uses historical examples (plague, cholera, pandemics, biological attacks), tabletop simulations (Clade X, Event 201), and popular culture: ‘someone doesn’t have to weaponize the bird flu–the birds are already doing that,’ says Laurence Fishburne’s character in Contagion (p.22). Leigh calls out biological weapons programmes, including those that skirted the law (the Soviet Union, Saddam Hussein), and acquaints the reader with the risks of synthetic biology.

Repeatedly, Leigh refers to popular fiction to make his points. Anecdotally, biotech entrepreneur Craig Venter recommended the book The Cobra Event to Bill Clinton, and this influenced biorisk policy.

Leigh highlights Scott Galloway’s observation that since more people die from disease than war, it might be reasonable to trade the CDC’s $7 billion budget for the Pentagon’s $700 billion budget (this made me reflect on general criticism of the military-industrial complex as a driver of runaway military spending, based around lobbying and vested interests. One possible future sees Boeing and Lockheed Martin coaxed into healthcare technology, generating the same revenues but with new focus). The chapter ends with a catch-all summary of previously published strategies for minimizing biothreats.

Climate change

Chapter 3 surveys the issue of climate change. There is only so much that can be said in 21 pages (compare the 40,000 people who attended COP26 recently, and the gigabytes of documents flying around). Leigh notes that increases of 3–4 degrees C are likely by 2100, but importantly that modelling suggests a 10 percent probability of 6 degrees C. If various ‘tipping points’ and ‘carbon bombs’ (pp.38–39) lead us to this unlikely but possible destination, then things could get very bad.

In this chapter Leigh really starts to escalate his hints that populist politics is exacerbating x-risk. He cites the actions of Donald Trump and Jair Bolsonaro. However, Leigh also notes that mitigations against climate change don’t hinge on longtermism: there are here-and-now economic reasons to act, as well as common ground across the political spectrum, such as tree planting and efficiency standards.

Nuclear weapons

Chapter 4 conveys the precariousness of the nuclear stalemate. We read the stories of close calls such as the Cuban missile crisis and the role of Vasili Arkhipov in averting nuclear disaster. Tales from Dr Strangelove introduce us to mutually assured destruction (MAD) and the Russian ‘Dead Hand’ retaliatory mechanism.

Leigh argues that the increasingly many nuclear powers make it mathematically more likely there will be conflict. Nuclear conflict could also begin if a terrorist nuclear attack gave the appearance that a nuclear power had launched a strike. These scenarios could lead to a possible nuclear winter and agricultural failure. Again, the actions of populists such as Trump (withdrawing from the Iran nuclear deal) tend towards further destabilisation.

A survey of actions to minimize nuclear risk is given, with Leigh advocating a ‘Manhattan Project II’ (p.72) to denuclearize the world. There is, however, no mention of the economics of the nuclear weapon industry and how vested economic interests might sustain the number of weapons. Still, Leigh wittily notes that, ‘By sending Dead Hand to the grave, Russia would make the planet a safer place’ (p.71).

Artificial Intelligence

The chapter on AI again follows a fairly standard exposition of the risks that superintelligence poses. Leigh hooks the reader into the looming power of AI with further Aussie-as statements such as, ‘playing chess and Go against machines, humans have about the same chance of victory as a regular guy might have of winning a boxing match against Tyson Fury’ (p.76). We read about the ‘intelligence explosion’, the ‘control problem’, and the ‘King Midas’ problem. True to form as a former professor of economics and now politician, Leigh notes that, ‘the problem of encoding altruism into a computer is akin to the challenge of writing a watertight tax code’ (p.78).

Among several possible strategies for mitigating the risk of AI, Leigh introduces Stuart Russell’s notion that programmers should focus on, ‘building computers that are observant, humble and altruistic’ (p.84). Such machines would consult humans to learn what we want. As I’ve noted in a previous post, the problem here seems to be engineering the humans not the machines. In the end, Leigh suggests we may need enforceable treaties to ensure embedding of human values, banning of autonomous killing, and promoting collaboration over competition to manage the emergence of great intelligence, not if, but when it occurs.

What are the odds?

Chapter 6 consists of a brief survey of other x-risks, as well as analysis of their probabilities when compared to a range of common risks. It is in this chapter that I felt like Leigh made two mistakes.

Firstly, he expresses the probability of the risks discussed in Chapters 2 to 5 and other risks such as comet/asteroid impact, supervolcanic eruptions, and anthropogenic degradation of the environment, in terms of probability across a century, but then compares these to the ‘reader’s risk of dying from X, in the next year’. This comparison of apples and oranges is flawed for two reasons:

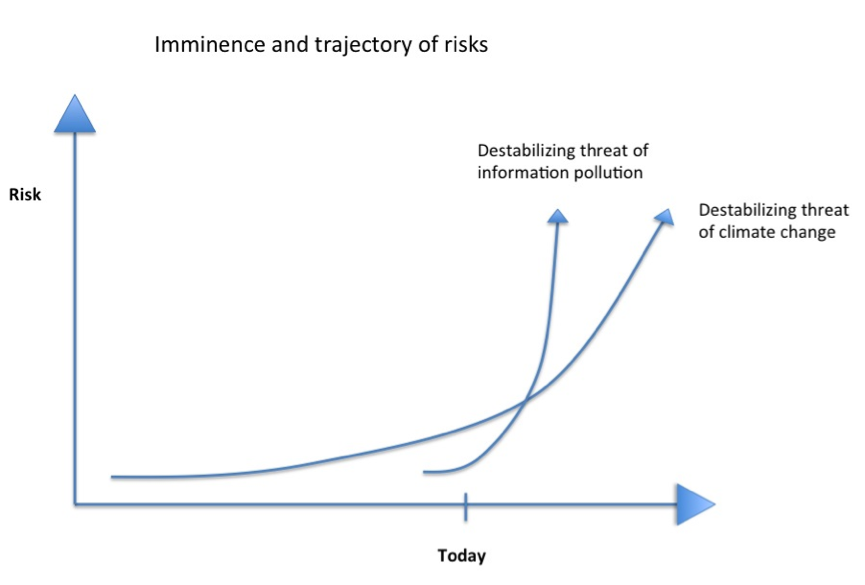

- The probability of the anthropogenic risks, in particular, is non-stationary. For example, the risk of AI killing us all is not 1 in 1000 next year (as 1 in 10 ‘this century’ would imply). It is far lower at present, but likely to rise far higher, per annum, across time (until we control it or succumb to it). This reasoning does not necessarily apply to the natural risks, although see posts such as this one arguing that volcanic risk is rising.

- Psychologically, it makes more sense to compare per century risks of catastrophe with per lifetime rather than per annum risks to personal wellbeing.
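The gap between a per-century figure and a per-annum figure can be made concrete with a rough sketch. The 1 in 10 per-century figure is the one quoted in the list; the linearly rising hazard is purely a hypothetical shape I have chosen for illustration, not something proposed by Leigh or Ord.

```python
# Three ways to read '1 in 10 this century' as a per-year risk.
# The linearly rising hazard is a hypothetical illustration only.
from math import prod

CENTURY_RISK = 0.10
YEARS = 100

# 1. Crude annualisation: divide the century figure by 100
crude_annual = CENTURY_RISK / YEARS

# 2. Constant hazard that compounds to the century figure
constant_annual = 1 - (1 - CENTURY_RISK) ** (1 / YEARS)


def century_risk_linear(slope: float) -> float:
    """Cumulative risk when the hazard in year k (1..100) is slope * k."""
    survival = prod(1 - slope * k for k in range(1, YEARS + 1))
    return 1 - survival


# 3. Rising hazard: bisect for the slope that gives the same century risk
lo, hi = 0.0, 0.001
for _ in range(60):
    mid = (lo + hi) / 2
    if century_risk_linear(mid) < CENTURY_RISK:
        lo = mid
    else:
        hi = mid
slope = (lo + hi) / 2

first_year = slope * 1
last_year = slope * YEARS

print(f"Crude annual risk:           {crude_annual:.6f}")
print(f"Constant-hazard annual risk: {constant_annual:.6f}")
print(f"Rising hazard, first year:   {first_year:.6f}")
print(f"Rising hazard, final year:   {last_year:.6f}")
```

With a rising hazard, the risk in the first year sits well below the crude 1 in 1000 and the risk in the final year sits well above it, even though the century total is unchanged.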

The second mistake I felt Leigh made is to introduce a hodgepodge of risks that have a greater probability in his estimation (which is based on Toby Ord’s), than some of the risks to which he has devoted entire preceding chapters. For example, cascading ecosystem failures appear to have a higher probability of causing human extinction than nuclear war. Arguably, there is some internal inconsistency of communication and emphasis in the book (though I certainly recognise nuclear threat as major).

As an aside, I’ve always found it interesting that the number of humans killed by ‘all natural disasters’ (eg flood, storm, earthquake, volcano, tsunami), as reported by Our World in Data, is approximately 60,000 per year. By contrast, crude annualization of the x-risk probabilities gestured toward by Leigh (though not made explicit in the book) suggests that each of the following risks is greater in expected fatalities than all natural disasters combined: unaligned AI (8 million annualised deaths in expectation), engineered pandemic (2.7 million), nuclear war (80,000), climate change (80,000), environmental damage (80,000). This seems to be another CDC vs Department of Defence situation. Where, really, should we be funnelling our resources?
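The crude annualisation behind these figures can be reproduced as follows. The per-century probabilities are, as I understand them, Toby Ord’s estimates from The Precipice, on which Leigh’s figures are based; the 8 billion population and the even spreading of risk over 100 years are simplifying assumptions of mine.

```python
# Crude annualised expected deaths from per-century extinction probabilities.
# Assumptions: 8 billion people, risk spread evenly over 100 years,
# per-century probabilities taken from Ord's estimates in The Precipice.

WORLD_POPULATION = 8e9
YEARS_PER_CENTURY = 100
NATURAL_DISASTER_DEATHS = 60_000  # approximate annual figure cited above

century_extinction_risk = {
    "unaligned AI": 1 / 10,
    "engineered pandemic": 1 / 30,
    "nuclear war": 1 / 1000,
    "climate change": 1 / 1000,
    "environmental damage": 1 / 1000,
}

# Expected deaths per year = probability * population / years
expected_annual_deaths = {
    risk: p * WORLD_POPULATION / YEARS_PER_CENTURY
    for risk, p in century_extinction_risk.items()
}

for risk, deaths in expected_annual_deaths.items():
    ratio = deaths / NATURAL_DISASTER_DEATHS
    print(f"{risk:22s} {deaths:12,.0f} per year ({ratio:.1f}x natural disasters)")
```

This is deliberately crude: it ignores the non-stationarity discussed above and treats extinction as the only outcome, so it is best read as an order-of-magnitude comparison.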

Regardless of the rhetorical method, Leigh makes it clear that some unlikely risks, such as asteroid/comet impact are already receiving significant government attention. This, coupled with the argument I’ve just outlined, certainly indicates that national risk assessment (NRA) and national risk register (NRR) methodologies may not be fit for purpose.

The crux of the book

Leigh next turns to populism and its relationship to totalitarianism, a threat recognised by the x-risk community because it could permanently curtail the potential of humanity.

We should probably pay more attention to these two chapters, and the penultimate one on democratic systems. This is because Leigh is a politician with over a decade of experience in the Australian House of Representatives. Therefore, we might infer some insider insight into the machinations of power and government, and it would be wise to grant him the floor to express his concerns.

The populist risk

In the preceding chapter, Leigh began to hint at the risk of totalitarianism, but it is here in Chapter 7, the longest chapter, that this threat comes to the foreground. Reiterating the rise of populist politics in recent years, we are reacquainted with the populist’s quest to foster a conflict between, ‘a pure mass of people and a vile elite’ (p.103). Populists can arise from all sides of the political spectrum. Conceptually there are four quadrants:

| | Left (equality) | Right (liberty) |
| --- | --- | --- |
| Internationalist | International egalitarians (Obama, Biden) | International libertarians (Romney, Bush) |
| Populist | Populist egalitarians (Sanders, Ocasio-Cortez) | Populist libertarians (Trump, Palin) |

Leigh claims that certain ideas have historically been particularly contagious. These include communism, capitalism, and populism. Furthermore, the world has become more populist in recent decades.

According to Leigh, there are five causes of this recent resurgence in populist politics:

- Jobs: low quality employment, work and wage insecurity

- Snobs: party elites who don’t take the populist threat seriously

- Race: fear of difference, impressionable masses responding to racist rhetoric

- Pace: rapid, disorienting, technological and cultural transformation

- Luck: some elections are very close and could have gone either way

On the issue of ‘snobs’, it certainly appears to me that elections have been stolen, though not in the way Trump claims: they have been stolen by populists, from inflexible snobs! See also Thomas Piketty’s arguments about the Brahmin Left and Merchant Right, in which left-wing politicians now appeal to an educated class rather than their traditional union base.

With respect to ‘luck’, I’m left wondering if this is a one-way valve. Do many populists try their hand, but only a few succeed by luck? However, once they then have power, they tend to rig the system to maintain their control.

Populism allegedly poses a threat to longtermism (and by implication x-risk mitigation), because populists:

- reject strong science

- reject effective institutions

- reject global engagement

- reject a sense of cooperation

However, the fact that populists are ‘anti’ these four things is the reason for their electoral appeal! Leigh proceeds to provide numerous examples of populists exhibiting these traits. Anti-internationalism in particular undermines hope of addressing x-risks, which by their nature may require global cooperation. Leigh argues that, therefore, the risk populism imposes on our future is greater than the risk it poses now.

To reiterate, Leigh is arguing that five issues (jobs, snobs, race, pace, luck) have led to the rise in populism and that populism increases the risk from x-risks, due to its short-term focus and ‘anti’ emphasis.

Totalitarianism – the death of democracy

In Chapter 8, we see the potential horror of widespread totalitarianism, which might emerge from the increasing hold that populism has around the world. Leigh says that widespread totalitarianism is, ‘not among [Toby] Ord’s top concerns, but does rank in mine’ (p.96). However, to me there is some equivocation over ‘totalitarianism’ in the works of Ord (The Precipice) versus the present book, with Ord focusing more on x-risk (permanently curtailing the future of humanity), whereas Leigh is more concerned with terrible national or regional states of affairs.

We are led through the histories of Adolf Hitler, Hugo Chavez, and Ferdinand Marcos, in a search for commonalities. What we discover is that these populists initially came to power through fair democratic processes, before devolving their democracies into authoritarian regimes. Recently, in Hungary and Turkey populist outsiders similarly won elections (due to jobs, snobs, race, pace, and luck) then used their acquired power to attack institutions, often in imperceptible steps.

Leigh catalogues ‘seven deadly sins’ (p.138) indicating that a leader is degrading democracy into an authoritarian regime. However, I felt there is an inherent tension between the need for totalitarianism to be global for it to ‘permanently curtail the potential of humanity’ and the inward-looking nationalism of populists. This tension seems to preclude populism as a pathway to a global totalitarian x-risk, although things could still get very bad, and populism can no doubt amplify other risks.

Fixing Politics

Leigh’s roadmap to fixing politics is probably the most disappointing aspect of the book. This is because having identified populism as a driver of x-risk and having identified five causes of populism, he then focuses his remedy on building stronger democracies (presumably to resist degradation by populists) rather than focusing on the underlying causes. Populists win elections in strong democracies and then re-jig the rules to suit themselves. So it’s not so clear how creating better rules is the ultimate solution.

Regardless, we are offered a suite of sensible democratic reforms, tinkering and strengthening of democracies particularly where the necessary continuous tinkering and strengthening has ceased (the last US constitutional amendment favouring democracy was 50 years ago).

Leigh favours mass participation in elections, promoting the compulsory Australian system. He favours convenient voting methods, independent redistricting, and reform of electoral-college systems where the popular vote may not determine who wins. Leigh also favours controls on the export of technologies that can sustain totalitarianism, such as facial recognition systems.

Many of Leigh’s proposals are positive steps, and I support a number, but none of them cut to the heart of the issue, which is the need to address jobs, snobs, race, pace, and luck so that populists cannot win free and fair elections in countries with strong democracies. In only one paragraph on p.153–4 (of 167) does the argument link proposed reforms to these five causes, and only two causes are addressed.

Finale

A final chapter summarises the argument with reference to risk and insurance. X-risks are not a concern because they are likely; they are a concern because they would be unbearable. This is why we need insurance against them. To overcome the tendency for politicians to focus on the likely rather than the devastating, we must resist populism, strengthen democracy, and practise more wisdom. In Leigh’s mind, these traits are synonymous with the philosophy of Stoicism and the political philosophy of John Rawls. We need courage, prudence, justice, and moderation.

‘A stoic approach to politics means spending less time caught up in the cycle of outrage and devoting more energy to making an enduring difference’ (p.164)

Perhaps it is through Stoicism that we address jobs, snobs, race, pace, and luck.

Conclusion

I enjoyed this book, but mostly because it was such an accessible summary of things I’d already learned during seven years’ engagement with x-risk content and the x-risk community. The book provides an accessible, entertaining introduction to x-risks for those not already immersed in the field. It benefitted from the simple style, amusing metaphors and Australianisms.

On the other hand, the solutions proposed, in my view, don’t really address the problems identified. We are offered an ambulance at the bottom of the cliff. Or perhaps a more Aussie take might be that we are advised to piss on our houses to protect them from a bushfire.

However, we often imagine that politicians are unreceptive to long-term issues. What’s the worst that could happen? demonstrates that there are representatives sympathetic to the issues of x-risk and future generations. We can try to leverage these politicians, amplify their voice, and connect such individuals with x-risk academic work via policy work on x-risks. Relevant examples of such work include:

- Existential Risk: Diplomacy and Governance by the Global Priorities Project

- Risk management in the UK by the APPGFG

- Future Proof by the Centre for Long Term Resilience

- Managing global catastrophic risks policy series

- Policy recommendations appendix in Toby Ord’s book The Precipice

I personally also think it is important to continue to develop arguments that demonstrate why x-risk is a priority here and now, not merely through a longtermist lens. Then we can cast the net as widely as possible, and convince those who will never be focused on the long term. Arguments that highlight flaws in national risk assessment and national risk register processes, and remedy these so that x-risk is rationally included under the extant scope of these devices are valuable, as are cost-effectiveness analyses grounded in the same short-termism standardly deployed in government. My back of the envelope calculations indicate this is an approach ripe for elaboration.

However, the work should extend beyond specific policies addressing x-risks separately or in combination, to ways we can strengthen democracies, and perhaps more importantly, reduce the likelihood of populist leaders emerging in the first place by addressing the issues of jobs, snobs, race, pace, and the role of luck. I was disappointed that What’s the worst that could happen? didn’t finish this analysis, yet to be fair, this is a book that you can read in a day and is a worthy introduction to x-risk for the uninitiated.

Future work and funding

Australia has a relatively new ‘Commission for the Human Future’ and I have been in favour of similar initiatives in New Zealand.

Earlier in 2021 I unsuccessfully applied for funding to drive such an initiative and you can read my application to the Effective Altruism Infrastructure Fund here.

I’m very interested to talk with anyone who might like to collaborate on, or fund, such a project aimed at understanding and reducing x-risk from a New Zealand perspective.
